How I AI: How Tomasz Tunguz digests 36 weekly podcasts without spending 36 hours listening

Tomasz Tunguz shares his terminal-based system for transforming podcasts into actionable insights and blog-worthy content.

Claire Vo

If you’re anything like me, you probably feel like you’re drowning in content. There’s so much to read, watch, and listen to, and it can feel like a full-time job just to keep up. It’s a problem I’m always trying to solve for myself and for you.

That's why I was so excited to talk with **Tomasz Tunguz**, a legend in the enterprise software business and founder of Theory Ventures.

Tomasz is a voracious learner, but he's also incredibly pragmatic. He faces the same challenge many of us do: a ton of valuable information locked away in podcasts, but not enough hours in the day to listen to it all.

His solution is a custom-built, terminal-based tool he calls the "Parakeet Podcast Processor." It digests 36 podcasts a week, pulling out actionable insights, investment ideas, and even drafts for blog posts in his own style. It’s a great example of building hyper-personalized software—the kind of tool that just wasn’t practical to build until recently.

We spent a lot of time talking about this tool—how he built it, and how he keeps making it better.

The "Parakeet Podcast Processor": From Audio to Actionable Insights

Tomasz told me he’d much rather read than listen—a feeling I think a lot of us can relate to, especially when you need to find specific information quickly. He built his "podcast ripper" to solve that exact problem. Every day, his system downloads the latest episodes from 36 of his favorite podcasts, including this one!

Here's how his system works:

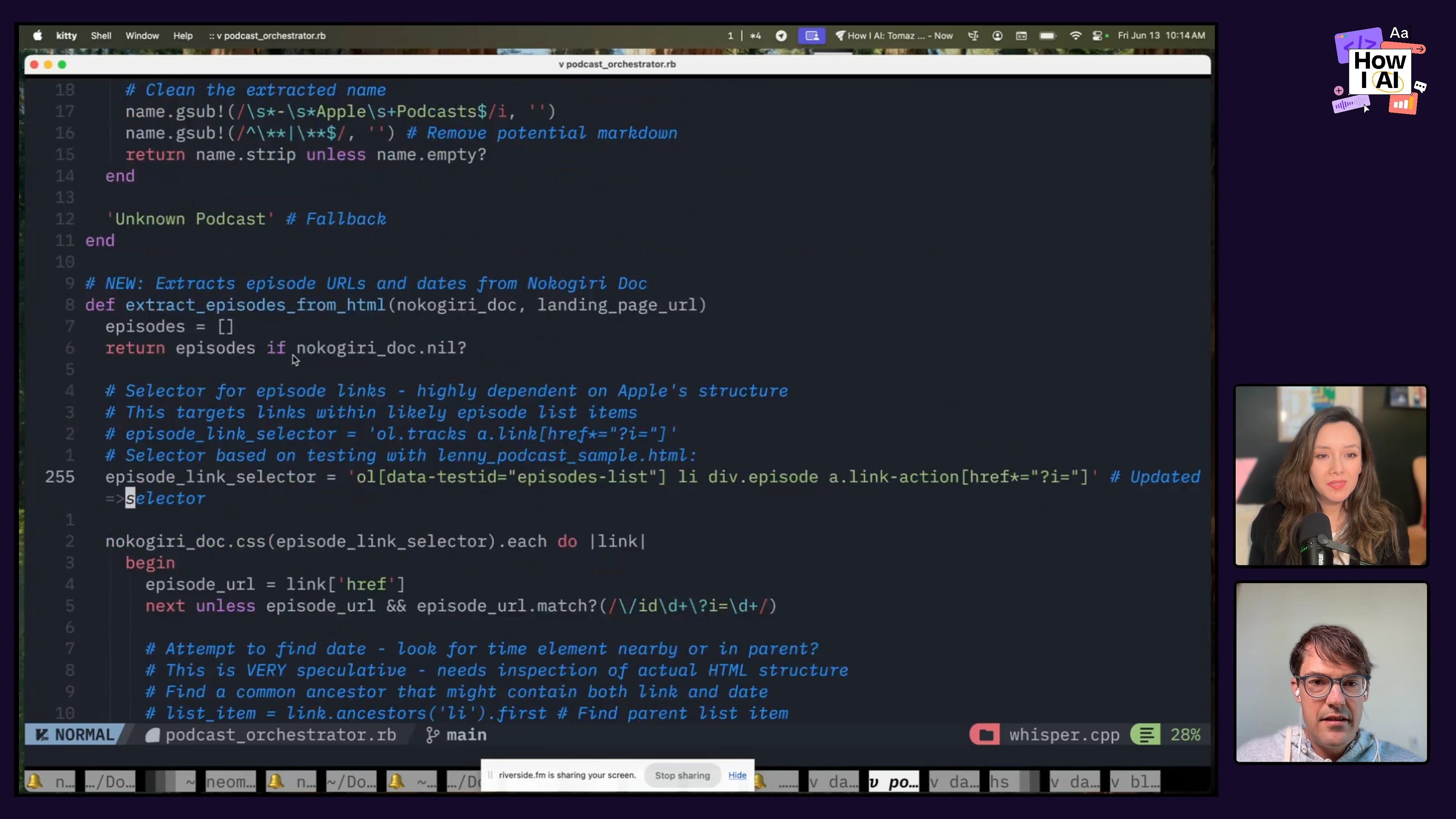

- Download & Transcribe: The system uses ffmpeg to prepare the downloaded audio files, and a speech-to-text model turns them into text. Initially, Tomasz relied on OpenAI's open-source Whisper, but he has since switched to Nvidia's Parakeet for its excellent local performance on a Mac.

- Clean Transcripts: Once transcribed, the raw text goes through a cleaning process using Gemma 3 (running locally via Ollama). The prompt is simple but effective:

You're a transcript editor. Clean up this podcast while preserving all the content. Keep the same length, remove the ums and the ahs, preserve all technical conversations.

- Orchestration & Storage: A "podcast orchestrator" manages the daily processing. Transcripts are stored in a local DuckDB database, keeping track of what's been processed.

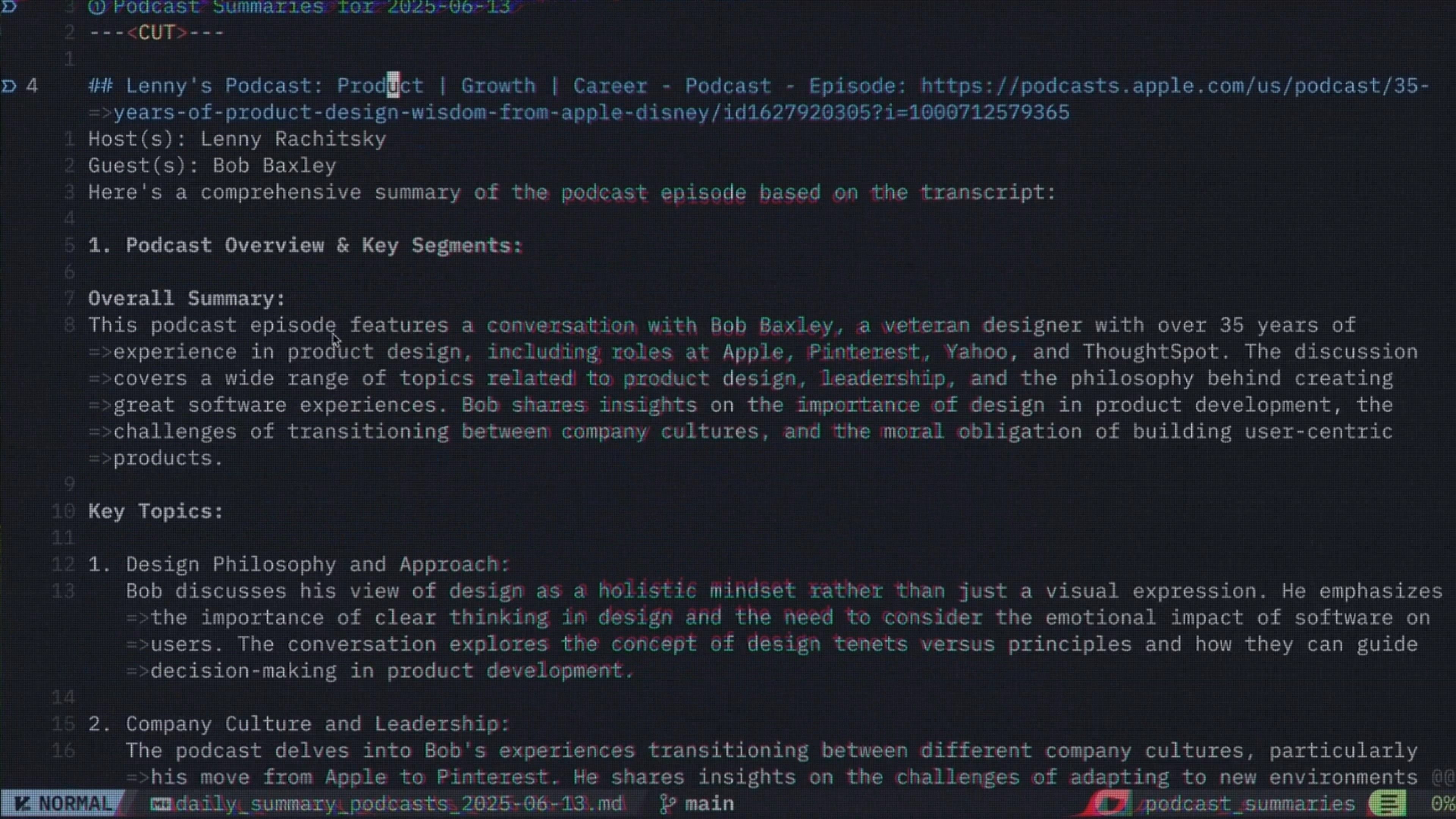

- Daily Summaries & Extraction: This is where the real value comes in. The clean transcripts are fed into a prompt that generates a structured daily summary.

The summary it generates is incredibly detailed. It gives him:

- Host and Guest: Quick context.

- Comprehensive Summary: A high-level overview.

- Key Topics and Themes: Categorized insights from the conversation.

- Actionable Quotes: Specific snippets that resonate or spark ideas.

- Investment Theses: For Theory Ventures, this is invaluable. The system suggests potential areas for market mapping or investment discussions (e.g., "AI assisted design tools").

- Noteworthy Observations: Drafts for potential social media posts.

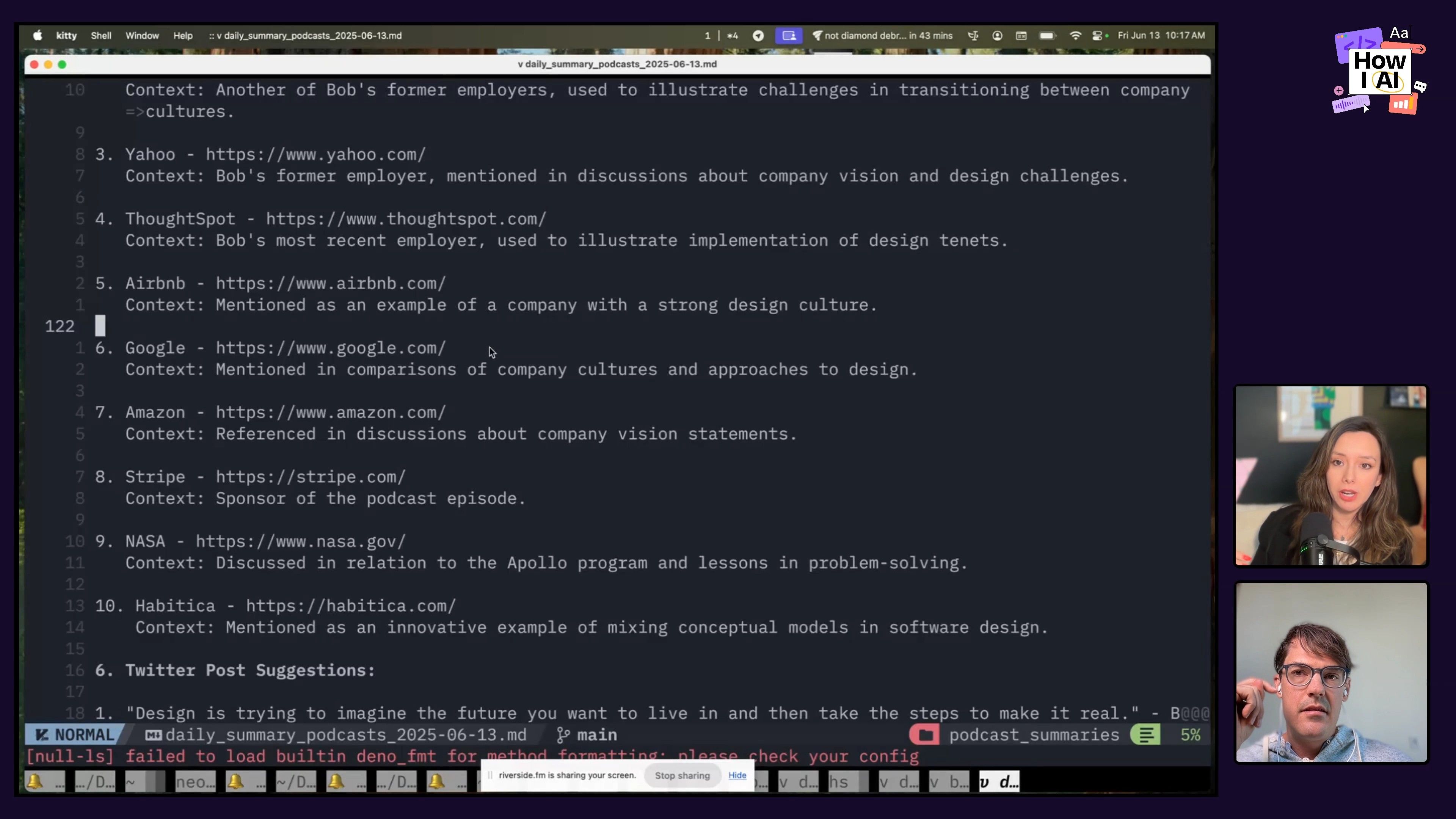

- Company Mentions: Identifies startups or established companies mentioned, which can be fed into a CRM for further research. For this step, Tomasz initially used Stanford's Named Entity Recognition library but found that a larger LLM simply did a better job with less pre-processing.
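To make the steps above more concrete, here is a minimal Python sketch of the orchestration idea: prepare the audio, skip anything already processed, then transcribe, clean, and store. It is not Tomasz's actual code. The function names, the table schema, and the ffmpeg settings (16 kHz mono WAV) are assumptions for illustration, and it uses Python's built-in sqlite3 as a stand-in for DuckDB so it runs without extra installs. Transcription and cleaning are passed in as plain functions where the real system would call Parakeet and Gemma 3.

```python
import sqlite3
from typing import Callable, Iterable

# Cleaning prompt quoted from the article.
CLEAN_PROMPT = (
    "You're a transcript editor. Clean up this podcast while preserving all "
    "the content. Keep the same length, remove the ums and the ahs, preserve "
    "all technical conversations."
)


def ffmpeg_command(src: str, dst: str) -> list[str]:
    """Build an ffmpeg call converting audio to 16 kHz mono (assumed settings)."""
    return ["ffmpeg", "-y", "-i", src, "-ar", "16000", "-ac", "1", dst]


def open_store(path: str = ":memory:") -> sqlite3.Connection:
    """Create a hypothetical transcript table; sqlite3 stands in for DuckDB."""
    db = sqlite3.connect(path)
    db.execute(
        "CREATE TABLE IF NOT EXISTS transcripts ("
        "episode_id TEXT PRIMARY KEY, transcript TEXT)"
    )
    return db


def process_new_episodes(
    db: sqlite3.Connection,
    episodes: Iterable[tuple[str, str]],   # (episode_id, audio_path) pairs
    transcribe: Callable[[str], str],      # placeholder for the speech model
    clean: Callable[[str, str], str],      # placeholder for the LLM: (prompt, text)
) -> list[str]:
    """Transcribe and clean only episodes not yet stored; return their ids."""
    processed = []
    for episode_id, audio_path in episodes:
        seen = db.execute(
            "SELECT 1 FROM transcripts WHERE episode_id = ?", (episode_id,)
        ).fetchone()
        if seen:
            continue  # already handled on an earlier run
        raw = transcribe(audio_path)
        cleaned = clean(CLEAN_PROMPT, raw)
        db.execute(
            "INSERT INTO transcripts VALUES (?, ?)", (episode_id, cleaned)
        )
        processed.append(episode_id)
    db.commit()
    return processed
```

Running `process_new_episodes` twice over the same episode list does the work only once, which is the "keeping track of what's been processed" part of the design.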

Why Terminal-Based Tools?

You might have noticed from the screenshots that Tomasz lives in the terminal. My first thought was, "Why not a UI?"

His answer was, as always, incredibly insightful. He referenced Dan Luu's blog post on latency, explaining that the terminal offers the lowest latency, which means less frustration. During COVID, he embraced the terminal as a hobby, and now it's his command center for everything from email to scripting.

I completely agree with him on the power of terminal-based tools like Claude Code. It's an amazing product that shows what thoughtful terminal design can look like. For anyone building dev tools, learning to design for the terminal is a superpower. It allows for constrained, focused interactions that can be incredibly efficient.

What really struck me is how this workflow highlights the power of hyper-personalized software. While many startups are building generic podcast digest apps (and I've seen plenty of people, myself included, build similar things for fun!), Tomasz has crafted an experience that fits his workflow perfectly. If something needs to change, he can jump into Claude Code and update his scripts in seconds. This level of customization, with so little friction, just wasn't efficient or even possible before the current generation of language models. It shows how AI can empower us to build these custom little tools that serve our own specific needs perfectly.

AI as Your AP English Teacher: Crafting Blog Posts

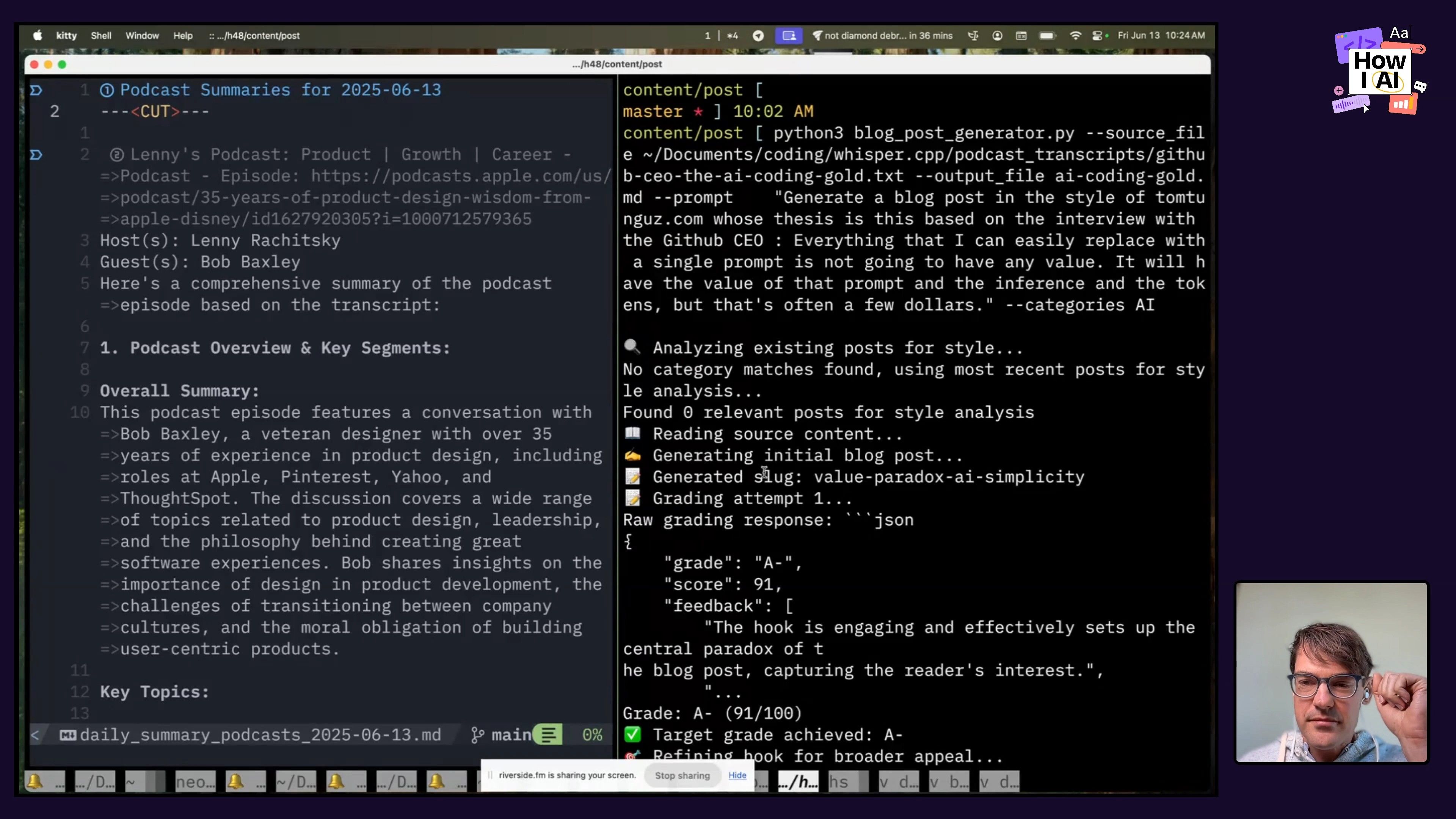

Tomasz doesn't just use AI to consume content; he uses it to create it, too. He has a second workflow that takes the ideas from his podcast summaries (or any other new idea) and turns them into drafts for his blog.

The Blog Post Generation Workflow

- Contextual Generation: Tomasz uses a "podcast generator" script. He feeds it the transcript of a relevant podcast (or just an idea) and a prompt outlining the desired content.

You are an expert blog writer specializing in technology and business content.

Based on the style analysis of existing posts, write in a style that:

{f''.join(style_analysis.tone_characteristics)}

Uses paragraphs of approximately {style_analysis.avg_paragraph_length} words

Follows these patterns: {f''.join(style_analysis.common_patterns)}

Hook examples from existing posts:

{chr(10).join('+' + hook['100'] + ' ' for hook in style_analysis.hook_examples)}

CRITICAL REQUIREMENTS:

Write approximately 500 words total

NO section headers or H2/H3 tags - write as continuous flowing prose

Structure as flowing paragraphs that build the argument naturally

Each paragraph should transition smoothly to the next

LIMIT each PARAGRAPH to at most 2 LONG sentences (this is very important)

Use shorter, punchier sentences within paragraphs for better readability

Create a blog post with:

1. Compelling hook that appeals to broad audience (1-2 paragraphs)

- Style Matching: This is a crucial, and challenging, step. The system accesses Tomasz's archive of 2,000+ blog posts stored in a LanceDB vector embeddings database. It dynamically analyzes relevant posts to understand his stylistic patterns, and can even adapt based on the target audience (e.g., Web3 vs. financial analysis).

He's tried fine-tuning models from OpenAI as well as Gemma, but getting the AI to truly capture his unique voice, rhythm, and even his preference for ampersands or incomplete clauses is incredibly difficult.

- The "AP English Teacher" Grading System: This might be the most clever part of his whole writing process. After generating an initial draft, Tomasz asks the AI to grade it like an AP English teacher. This goes back to his personal experience: a high school AP English class taught him to love writing through structured feedback.
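For a feel of how the style-matching step could feed the prompt, here is a small Python sketch. The field names (`tone_characteristics`, `avg_paragraph_length`, `common_patterns`, `hook_examples`) come from the prompt excerpt above. Everything else is an assumption: the `StyleAnalysis` class, how each value is computed, and the shortened prompt wording are hypothetical, and the sketch takes a plain list of posts instead of querying LanceDB.

```python
from dataclasses import dataclass


@dataclass
class StyleAnalysis:
    """Hypothetical container mirroring the fields used in the prompt."""
    tone_characteristics: list[str]
    avg_paragraph_length: int
    common_patterns: list[str]
    hook_examples: list[str]


def analyze_style(posts: list[str], tone: list[str], patterns: list[str]) -> StyleAnalysis:
    """Compute simple stats from posts; tone and patterns are supplied by hand."""
    paragraphs = [p for post in posts for p in post.split("\n\n") if p.strip()]
    total_words = sum(len(p.split()) for p in paragraphs)
    avg = round(total_words / len(paragraphs)) if paragraphs else 0
    # Treat the first paragraph of each post as its hook, trimmed to 100 chars.
    hooks = [post.split("\n\n")[0].strip()[:100] for post in posts if post.strip()]
    return StyleAnalysis(tone, avg, patterns, hooks)


def build_prompt(style: StyleAnalysis) -> str:
    """Assemble a shortened version of the blog-writing prompt."""
    hooks = "\n".join("- " + h for h in style.hook_examples)
    return (
        "You are an expert blog writer specializing in technology and business content.\n"
        f"Write in a style that is {', '.join(style.tone_characteristics)}.\n"
        f"Use paragraphs of approximately {style.avg_paragraph_length} words.\n"
        f"Follow these patterns: {', '.join(style.common_patterns)}.\n"
        f"Hook examples from existing posts:\n{hooks}\n"
        "Write approximately 500 words total, with no section headers."
    )
```

In a setup like the one Tomasz describes, the `posts` argument would be the handful of archive posts most relevant to the new topic, which is how the style could shift by audience.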

The Iterative Refinement Process

The AI doesn't just grade once; it goes through three grading attempts to refine the blog post.

The grading prompt is detailed, evaluating:

- Letter grade and numerical score.

- Hook (the crucial opening).

- Argument clarity.

- Evidence and examples.

- Paragraph structure.

- Conclusion strength.

- Overall engagement.

Tomasz told me the AI often critiques his transitions as "too harsh," and he usually loses points for that! While the AI strives for grammatical perfection, it often misses the stylistic nuances that make human writing engaging. He often sees the first grade as an A-, then it might dip into B/B+ territory, and then pop back up. It’s funny to see this explore-exploit behavior in action. The third iteration often helps reinforce brevity after the AI's tendency to get verbose.
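The grade-and-revise loop can be sketched in a few lines of Python. This is an illustration under stated assumptions, not the actual script: `grade` and `revise` are placeholder functions standing in for model calls, the prompt wording is paraphrased from the criteria listed above, and keeping the best-scoring draft is a design choice added here because the scores can dip and recover between rounds.

```python
from typing import Callable

# Rubric items taken from the list in the article.
CRITERIA = [
    "hook",
    "argument clarity",
    "evidence and examples",
    "paragraph structure",
    "conclusion strength",
    "overall engagement",
]


def grading_prompt(draft: str) -> str:
    """Build a grading request (wording is a paraphrase, not the real prompt)."""
    rubric = ", ".join(CRITERIA)
    return (
        "You're an experienced AP English teacher. Give a letter grade and a "
        f"numerical score, then evaluate: {rubric}.\n\n{draft}"
    )


def refine(
    draft: str,
    grade: Callable[[str], tuple[float, str]],  # prompt -> (score, feedback)
    revise: Callable[[str, str], str],          # (draft, feedback) -> new draft
    rounds: int = 3,
) -> tuple[str, list[float]]:
    """Grade `rounds` times, revising in between; return best draft and scores."""
    best_draft, best_score = draft, float("-inf")
    history: list[float] = []
    for i in range(rounds):
        score, feedback = grade(grading_prompt(draft))
        history.append(score)
        if score > best_score:
            best_draft, best_score = draft, score
        if i < rounds - 1:  # no need to revise after the final grade
            draft = revise(draft, feedback)
    return best_draft, history
```

Returning the score history alongside the draft makes the A-minus, B-plus, and back-up pattern easy to see for each post.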

AI in Writing Education: A First Pass Filter

This "AP English teacher" approach sparked a bigger conversation about AI's role in education. While many worry about students using AI to write essays, Tomasz suggests a more constructive use: as a first-pass filter.

You're an experienced English teacher. Here's the letter grade and numerical score, and then here are the evaluations: the hook, argument clarity, evidence and examples, paragraph structure, conclusion strength, overall engagement.

AI can quickly handle the "rote analysis of logic of language": grammar, sentence structure, conjunctions, dangling modifiers. This frees up teachers to focus on the more human, creative, and stylistic aspects of writing, encouraging students to develop their unique voice after mastering the fundamentals.

My advice to students (and anyone struggling with writer's block): instead of asking AI to write for you, ask it to be your critical editor. "If you were my teacher, how would you grade this and what feedback would you give me?" This practical application allows you to develop essential writing skills while taking advantage of AI's instant feedback loop.

The Future of Work & Prompting Techniques

Towards the end of our chat, I asked him a couple of questions about the future:

The 30-Person, $100 Million Company

Tomasz predicts we'll see a 30-person company reach $100 million in revenue by 2025. He envisions a structure with a product-focused CEO, 12-15 engineers, a couple of customer support/devrel people, maybe a salesperson for big contracts, and a solutions architect.

The key? A predominantly software engineering team, a product-led growth (PLG) motion, and significant internal platforms where engineers use AI to gain massive leverage. The ability to rapidly prototype, get AI critiques, test, and push code to production will enable these lean, high-output teams.

Getting AI to Listen: The "AI Duke It Out" Method

I had to ask him: when an AI is being stubborn and not giving him the output he wants, what's his go-to technique? His answer: he makes two AIs duke it out.

He'll present an example of the input, the unwanted AI output, and the desired output, then have models like Gemini and Claude compete to polish the script.

This reminded me of a tip from a previous guest, Hillary, who "negs" the models, saying things like, "Gemini, look at this garbage Claude gave me! Surely you can do better." It's a "mean girls" approach to get them to compete, and it's something I definitely want to try as a weekend project!
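If you want to try the "duke it out" idea yourself, here is one way it could look in Python. Tomasz didn't spell out the mechanics, so treat all of this as an assumption: the prompt wording, the turn-taking order, and the function names are hypothetical, and the models are plain placeholder functions, not real API clients.

```python
from typing import Callable, Optional

Model = Callable[[str], str]  # placeholder: takes a prompt, returns a rewritten script


def critique_prompt(
    script: str,
    sample_input: str,
    unwanted: str,
    desired: str,
    rival: Optional[str] = None,
) -> str:
    """Show the input, the bad output, and the target, then ask for a rewrite."""
    note = (
        f"A rival model ({rival}) wrote the version below. Improve on it.\n"
        if rival
        else ""
    )
    return (
        f"{note}Input example:\n{sample_input}\n\n"
        f"Output we got (not what we want):\n{unwanted}\n\n"
        f"Output we want:\n{desired}\n\n"
        f"Rewrite this script so it yields the desired output:\n{script}"
    )


def duke_it_out(
    script: str,
    sample_input: str,
    unwanted: str,
    desired: str,
    models: dict[str, Model],
    rounds: int = 2,
) -> str:
    """Pass the script back and forth, each model improving on the last one's version."""
    names = list(models)
    rival: Optional[str] = None
    for turn in range(rounds * len(names)):
        name = names[turn % len(names)]
        prompt = critique_prompt(script, sample_input, unwanted, desired, rival)
        script = models[name](prompt)
        rival = name  # the next model is told whose work it is improving
    return script
```

To use it, you would wrap each provider's client in a function that takes a prompt string and returns text, then pass them in as something like `{"gemini": ask_gemini, "claude": ask_claude}` (both names hypothetical).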

Tomasz’s system is an amazing example of using AI for personal productivity. He’s created a custom information flow that’s perfectly tuned to his goals, but he still keeps a human, artistic touch on the final creative work. It feels like a real glimpse into the future of work, and it’s all happening right inside his terminal.
