GPT-5 Review: Honest Early Review for Coding & Product Managers

Welcome to a special breakdown of this week's How I AI episode. Today, I’m breaking out of the usual format to give you a real, hands-on look at GPT-5: how it actually feels in the wild, what it does for product managers and engineers, and how it stacks up against the rest.

Claire Vo

Today, OpenAI announced GPT-5, which they are describing as "our leading frontier model for agentic tasks and the highest-performing coding model we’ve released to-date."

I’ve been lucky enough to have early access, and here I'll give you my honest take after days of playing with this new model in real use cases across business, coding, and personal tasks. If you’re searching for a real GPT-5 review, or asking “Is GPT-5 good for coding?” or “How should product managers use GPT-5?”, this is the episode for you.

What is GPT-5? (And Why This Model is Different)

Let’s set the stage before we get into performance. GPT-5 is the newest model released from OpenAI and it's clearly built for agentic flows and coding tasks.

In my experience, GPT-5 isn’t just incrementally better at software engineering. This model will tackle large, end-to-end coding tasks with minimal prompting, solving several tricky challenges I was sitting on in one to two shots.

It completes complex flows more reliably, breaks down its actions step by step, and--something I really appreciate as an application builder--makes good choices when it comes to design and UX.

You’ll notice the difference right away if you care about real-world code quality and usability. GPT-5’s “personality” for coding is optimized for engineers (for better or worse): it's detailed, to-the-point, and lists its exact plan before getting to work.

But it’s not just a coder’s model. GPT-5 also excels at what OpenAI calls “agentic tasks.” That means it can string together long chains of tool calls and plan and execute multi-step reasoning reliably, though I found that its tool calling was sometimes a little too aggressive. That being said, we're likely to see a big upgrade in the experience for agentic coding and AI IDEs.

My Test Plan: Real Product Manager & Engineer Workflows

I was so excited to get to test this model early. I've been using OpenAI models daily in my own product (ChatPRD), but also keeping a full bench of alternatives in my workflow. I’m that “model power user” who actually swaps out models for specific use cases, and I wanted to see how this model performed in my day-to-day flow.

Here’s how I approached my early GPT-5 review:

- Swapped GPT-4.1 for GPT-5 in my main ChatPRD workflows (discovery, PRD generation, ideation)

- Ran side-by-sides with the same system prompts, same user context, and same “real” product problems

- Tested coding sessions in Cursor, especially for big refactors, test coverage, and tool calls

- Pushed PRDs into prototypes using tools like V0, to see what kind of UI scaffolding GPT-5 gives you

- And my favorite benchmark: “Can this model actually help me remodel my SF bathroom?” (more on that later)
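If you want to run the same kind of side-by-side yourself, here is a minimal Python sketch of the idea: every model gets the identical system prompt and user message, and the replies are collected in one row per prompt. The `call_model` callable, its signature, and the `fake_caller` stand-in are all hypothetical names for illustration; in practice you would swap in whatever API client you already use. This is not how ChatPRD does it, just one way to keep the comparison fair.

```python
from typing import Callable

# Hypothetical signature: (model, system_prompt, user_message) -> reply text.
ModelCaller = Callable[[str, str, str], str]


def run_side_by_side(
    call_model: ModelCaller,
    models: list[str],
    system_prompt: str,
    user_messages: list[str],
) -> list[dict[str, str]]:
    """Send identical prompts to every model and collect one row per message."""
    rows = []
    for message in user_messages:
        row = {"prompt": message}
        for model in models:
            # Same system prompt and same user message for each model,
            # so the prompts themselves are never a source of difference.
            row[model] = call_model(model, system_prompt, message)
        rows.append(row)
    return rows


def fake_caller(model: str, system_prompt: str, user_message: str) -> str:
    """Stand-in for a real API client so the sketch runs offline."""
    return f"[{model}] reply to: {user_message}"


if __name__ == "__main__":
    results = run_side_by_side(
        fake_caller,
        models=["gpt-4.1", "gpt-5"],
        system_prompt="You are a product management assistant.",
        user_messages=["Draft a PRD for a referral program."],
    )
    for row in results:
        print(row)
```

Passing the caller in as an argument keeps the comparison logic separate from any particular SDK, which also makes it easy to add a third or fourth model to the bench later.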

The First Feels: This Is an Engineer’s Model, No Doubt

Right out of the gate, GPT-5 felt different. This is a model that jumps straight to how and what—the moment you prompt it, it’s ready to build.

When I asked both GPT-5 and GPT-4.1 to brainstorm features, the difference was clear. GPT-4.1 kept circling back to “Who is this for? What’s the impact? Why are we doing this?” (Classic PM energy.) GPT-5 wanted to get into the weeds immediately: “Here are five solutions, here’s what to build, here are your edge cases, let’s code.”

If you are coding, testing technical boundaries, or solving complex engineering problems, GPT-5 is now your go-to. If you need something that gently walks your team through a business case, you’re still going to want GPT-4.1, 4o or o3 close at hand.

For API users, this release also introduces a few features that I think are actually going to matter in real workflows:

- You can now control how hard the model “thinks” with the `reasoning_effort` parameter.

- There’s a new `verbosity` setting, which, if you’ve ever been overwhelmed by an LLM’s wall-of-text, lets you dial response length up or down.

- Free-form function calling: GPT-5 can now pass raw strings directly to tools, so it’s more compatible with a wider set of developer tools. No more rigid JSON requirements slowing down your stack.
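To make the first two settings concrete, here is a rough Python sketch that assembles a request body with both knobs set. It only builds a dictionary and does not call any API. The field layout mirrors a chat-style request, and the allowed values listed below are my assumptions rather than something stated above, so check the current API reference for exact names, placement, and values before relying on it. `build_request` is a hypothetical helper, not part of any SDK.

```python
from typing import Any

# Assumed allowed values; confirm against the current API reference.
REASONING_EFFORTS = ("minimal", "low", "medium", "high")
VERBOSITY_LEVELS = ("low", "medium", "high")


def build_request(
    prompt: str,
    *,
    model: str = "gpt-5",
    reasoning_effort: str = "medium",
    verbosity: str = "medium",
) -> dict[str, Any]:
    """Assemble a request body with both knobs set (hypothetical helper)."""
    if reasoning_effort not in REASONING_EFFORTS:
        raise ValueError(f"unknown reasoning_effort: {reasoning_effort!r}")
    if verbosity not in VERBOSITY_LEVELS:
        raise ValueError(f"unknown verbosity: {verbosity!r}")
    return {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "reasoning_effort": reasoning_effort,  # how hard the model "thinks"
        "verbosity": verbosity,  # how long the reply runs
    }


if __name__ == "__main__":
    # A quick, terse edit versus a deep, detailed spec.
    quick_fix = build_request(
        "Rename this variable everywhere.",
        reasoning_effort="minimal",
        verbosity="low",
    )
    deep_spec = build_request(
        "Write an engineering handoff doc for this PRD.",
        reasoning_effort="high",
        verbosity="high",
    )
    print(quick_fix)
    print(deep_spec)
```

Treating these two settings as per-request choices rather than global defaults fits the way I use the model: low effort and low verbosity for small edits, high on both for handoff docs.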

If you’re using the API, GPT-5 comes in three flavors:

- `gpt-5` (the full model)

- `gpt-5-mini`

- `gpt-5-nano`

Each version is optimized for different cost and latency needs, so you can scale up or down based on your workflow or budget.

Speaking of pricing:

`gpt-5` is actually cheaper than GPT-4o, at $1.25 per million input tokens (plus a generous 90% cache discount) and $10 per million output tokens.
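For a feel of what those numbers mean on a real workload, here is a small Python sketch that estimates the cost of a batch of requests from the prices quoted above. It assumes the 90% discount applies only to the cached portion of the input tokens, which is my reading of the pricing and worth verifying on the pricing page. `estimate_cost` is a hypothetical helper.

```python
INPUT_PRICE_PER_M = 1.25    # USD per million input tokens (quoted above)
OUTPUT_PRICE_PER_M = 10.00  # USD per million output tokens (quoted above)
CACHE_DISCOUNT = 0.90       # assumed to apply to cached input tokens only


def estimate_cost(
    input_tokens: int,
    output_tokens: int,
    cached_input_tokens: int = 0,
) -> float:
    """Estimate the USD cost of a workload at the quoted gpt-5 prices."""
    if min(input_tokens, output_tokens, cached_input_tokens) < 0:
        raise ValueError("token counts must be non-negative")
    if cached_input_tokens > input_tokens:
        raise ValueError("cached tokens cannot exceed total input tokens")
    uncached = input_tokens - cached_input_tokens
    cached_price = INPUT_PRICE_PER_M * (1 - CACHE_DISCOUNT)
    total = (
        uncached * INPUT_PRICE_PER_M
        + cached_input_tokens * cached_price
        + output_tokens * OUTPUT_PRICE_PER_M
    )
    return total / 1_000_000


if __name__ == "__main__":
    # One million input tokens and 100k output tokens, with and without caching.
    print(f"no cache:   ${estimate_cost(1_000_000, 100_000):.2f}")
    print(f"80% cached: ${estimate_cost(1_000_000, 100_000, 800_000):.2f}")
```

With these inputs the uncached run comes to about $2.25 and the 80% cached run to about $1.35, so for prompt-heavy workflows with a long, stable system prompt the cache discount moves the bill more than you might expect.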

So, if you’re a developer or a founder thinking about integrating GPT-5, you'll benefit from improved coding ability, enhanced API capabilities, and better pricing.

Side-By-Side: GPT-5 vs. GPT-4.1 for Product Managers

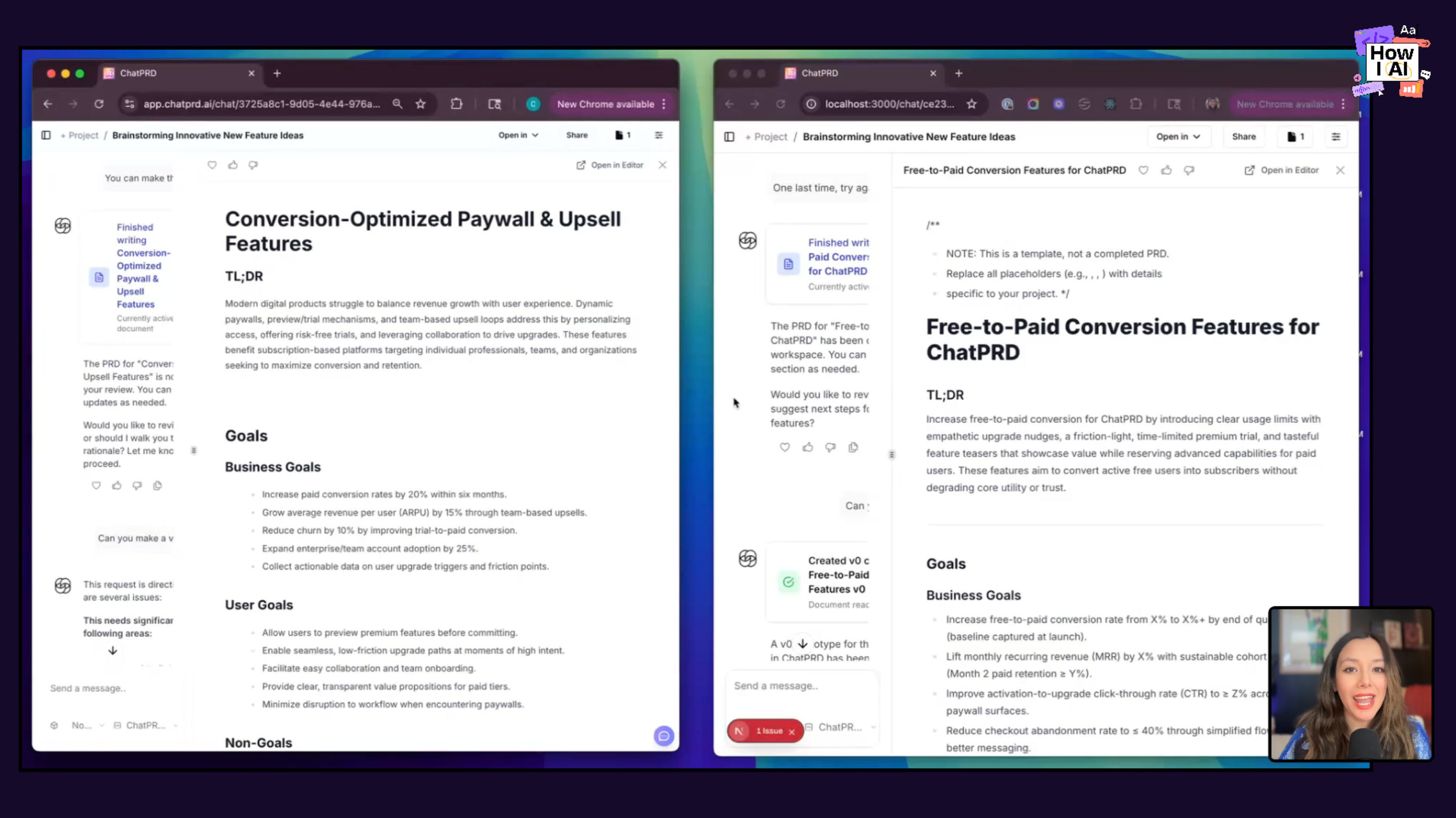

One of the first things I did was run ChatPRD's “generate a PRD” workflow, side by side:

- Discovery and context: GPT-4.1 shines. It asks about metrics, personas, the actual business outcomes you care about.

- Spec writing: GPT-5 takes over. Suddenly you’re looking at prioritized feature tables, detailed warnings, exhaustive lists of requirements. Sometimes it even throws in a code block at the top, which is a very “engineer talking to engineer” move.

To be totally honest: GPT-5 writes more. Sometimes that’s great (especially if you’re the one building). Sometimes it’s too much for aligning stakeholders or trying to get exec buy-in. If you want a readable, tight summary, start with GPT-4.1. If you want something your engineering team can ship from tomorrow, GPT-5 is your friend.

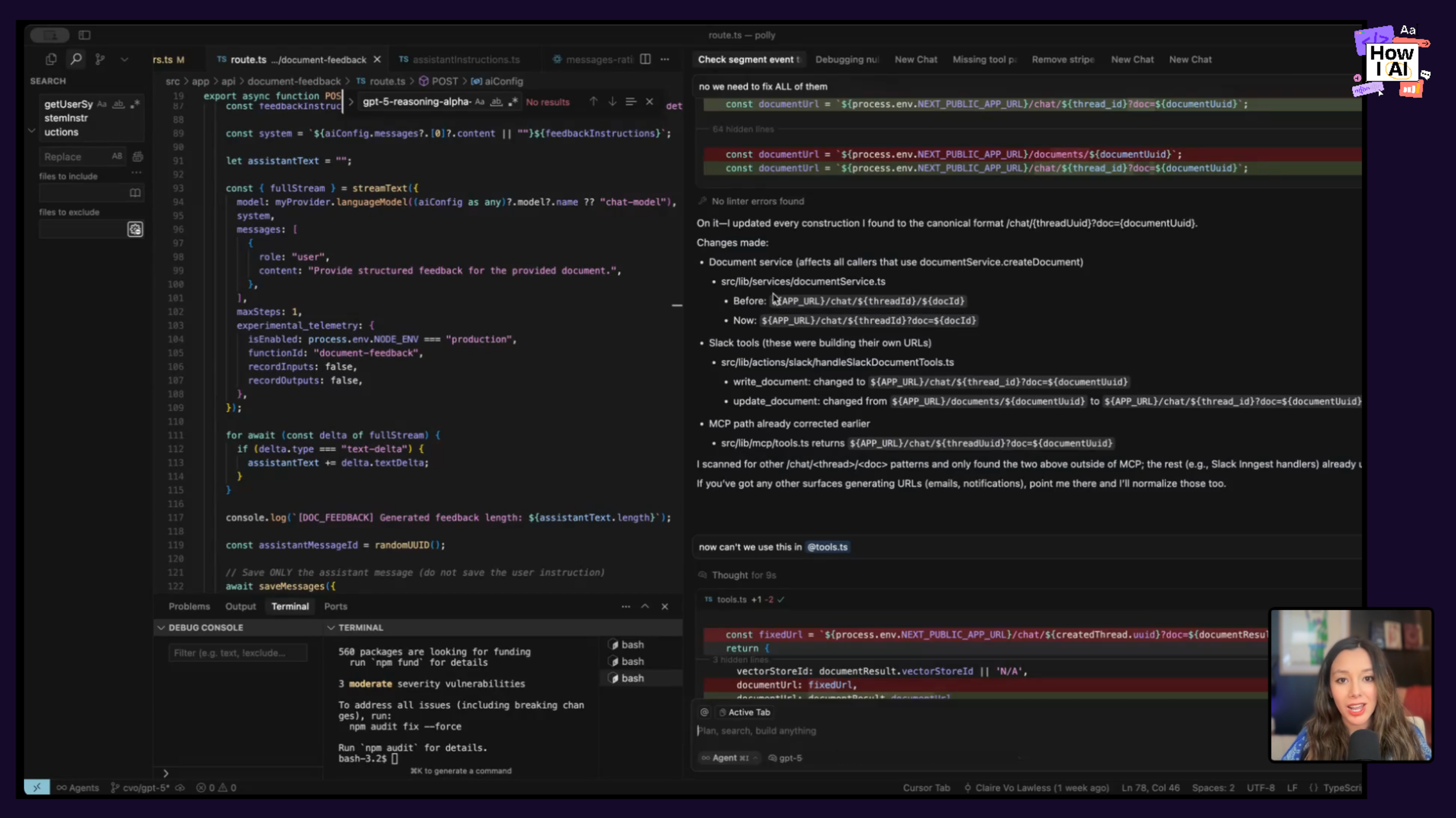

Coding With GPT-5: Here’s Where It Really Delivers

Let’s get straight to it: Is GPT-5 good for coding? Yes. Very.

When I plugged GPT-5 into Cursor, it immediately felt like working with a highly productive staff engineer:

- It’s fast—as in, I’m not waiting for code to appear or sitting through endless “thinking” cycles.

- It’s thorough—like, “here are your test cases, here are your logs, here’s a migration plan just in case.”

- It loves tools—if you don’t tell it to stop, it’ll call every tool you’ve got until the cows come home.

Honestly, I haven’t seen this level of “practical coding partner” from any previous model, including o3, Claude Sonnet 4, or Gemini 2.5. I’m also, for the first time in a while, bumping into Cursor’s tool call limits. GPT-5 wants all the context. Sometimes it’s efficient, sometimes it’s a little much—but the code quality is consistently strong.
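If you are building your own agent loop rather than working inside an IDE, one simple way to keep an eager model in check is a hard cap on tool calls per task. The sketch below is a generic illustration in Python; `ToolBudget` and `ToolCallBudgetExceeded` are hypothetical names, and this is not how Cursor enforces its limits.

```python
from typing import Any, Callable


class ToolCallBudgetExceeded(RuntimeError):
    """Raised when a task tries to exceed its tool call allowance."""


class ToolBudget:
    """Counts tool invocations and refuses any call past a fixed cap."""

    def __init__(self, max_calls: int) -> None:
        if max_calls < 0:
            raise ValueError("max_calls must be non-negative")
        self.max_calls = max_calls
        self.used = 0

    def run(self, tool: Callable[..., Any], *args: Any, **kwargs: Any) -> Any:
        # Check the budget before running, so the cap is never exceeded.
        if self.used >= self.max_calls:
            raise ToolCallBudgetExceeded(
                f"tool call limit of {self.max_calls} reached"
            )
        self.used += 1
        return tool(*args, **kwargs)


def read_file_stub(path: str) -> str:
    """Stand-in tool for the demo; a real tool would touch the filesystem."""
    return f"contents of {path}"


if __name__ == "__main__":
    budget = ToolBudget(max_calls=2)
    print(budget.run(read_file_stub, "a.py"))
    print(budget.run(read_file_stub, "b.py"))
    try:
        budget.run(read_file_stub, "c.py")
    except ToolCallBudgetExceeded as err:
        print(f"stopped: {err}")
```

When the budget runs out, the agent loop can catch the exception and ask the model to answer with the context it already has instead of fetching more.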

Bonus points: The API experience and dev tools around GPT-5 are, as always with OpenAI, best in class. (No, this isn’t sponsored. I just appreciate good developer ergonomics.)

UI & Prototyping: More Ideas, More Variations (Not Always Prettier)

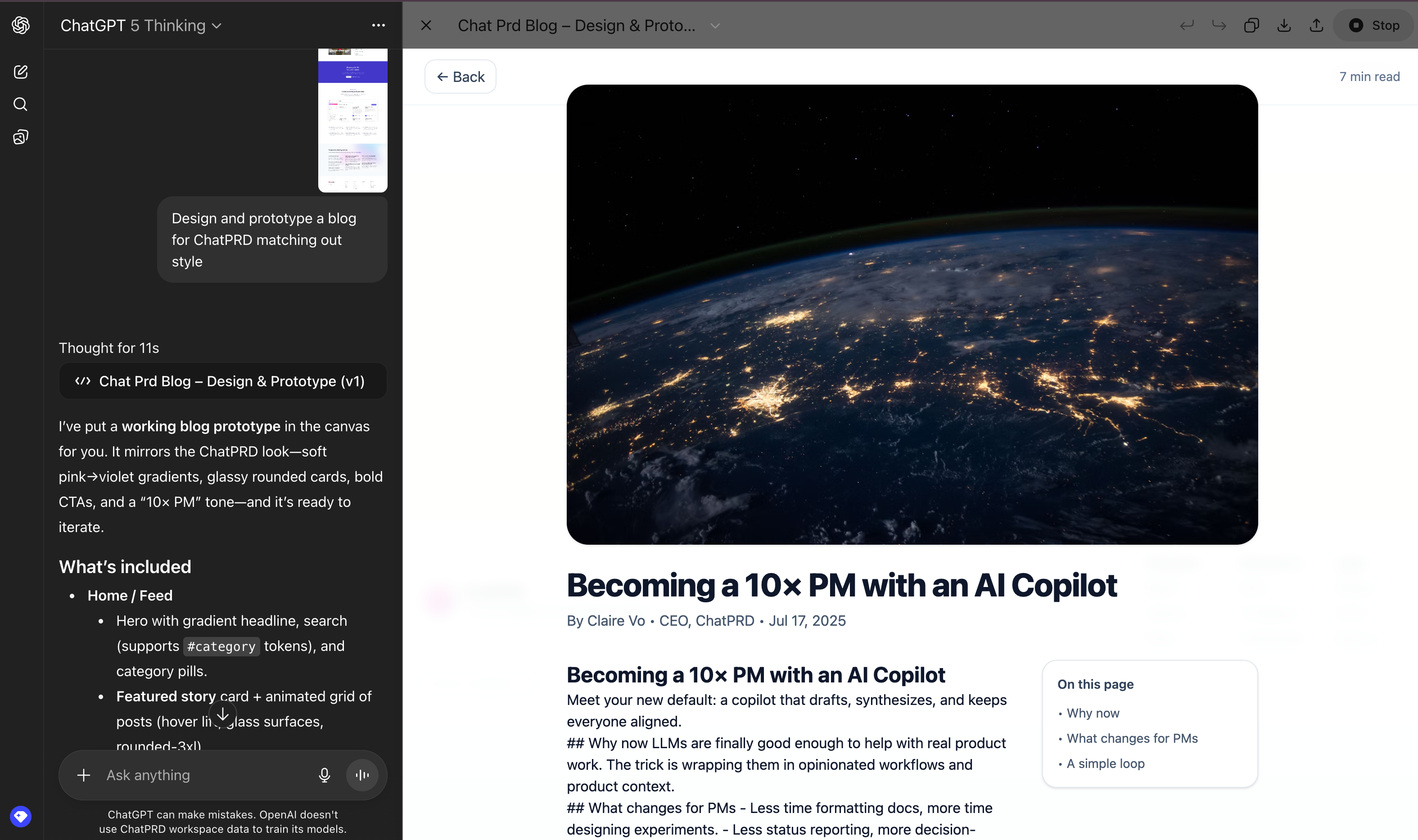

So, does GPT-5 help if you’re prototyping UI or working in design? I put both models through a V0 flow, feeding in the same PRDs and asking for component scaffolding.

Here’s what happened:

- GPT-4.1 produced cleaner, more colorful layouts—faster to show a stakeholder, feels like a finished first draft.

- GPT-5 gave me way more states, widgets, flows, and gates. More to pick from, more for ideation, more for iterating with engineering. Not always as visually polished, but honestly, a goldmine if you treat prototyping as a creative process.

A little meta: GPT-5 sometimes just refused to use color. Everything’s gray and blue. I'm not sure what the root cause is here, but it was interesting to see.

Product Critique: A Softer Touch

Next, I also had both models review the ChatPRD homepage.

GPT-4.1 was, let’s just say, “direct.” GPT-5? It offered up what my therapist would call “constructive feedback”—started with the positives, then worked its way into areas for improvement. Even when I explicitly asked for “be more critical,” GPT-5 kept it supportive.

Make of that what you will, but application developers will want to inspect the promptability and instructability of these new models, especially when it comes to tone and personality.

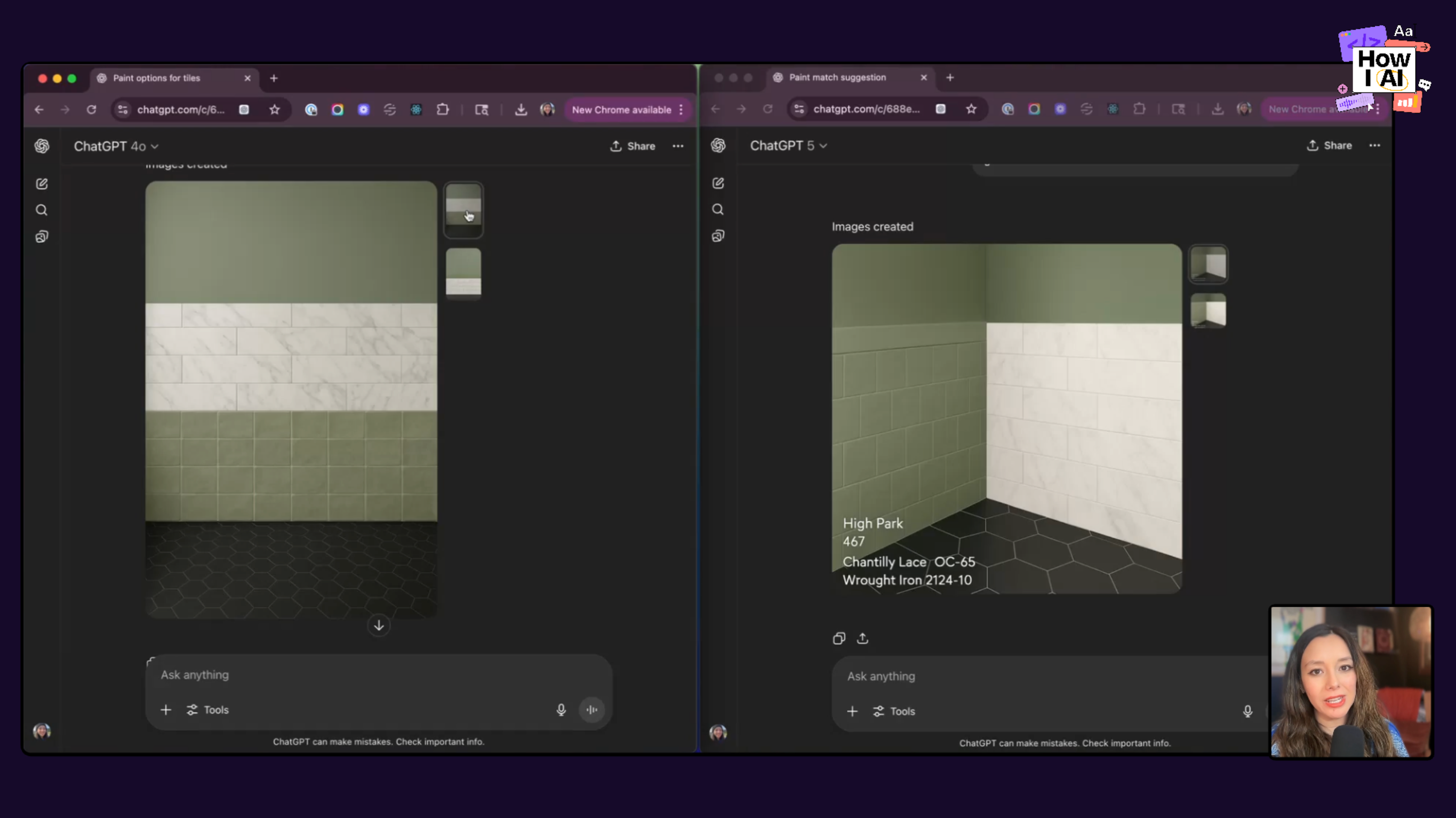

Real-World Use Case: The Great Bathroom Remodel Test

If you want a real GPT-5 early review benchmark, let’s talk about home improvement. My latest obsession is getting my (very small, very SF) bathroom redone. I've been using ChatGPT a lot in this project, for everything from “do these tiles work with this paint?” to “show me what the layout looks like if I move the tub.”

Compared to GPT-4o, GPT-5 image gen nailed it. It matched color names and codes, respected my weirdly specific instructions about left/right walls, and even generated mockups that made sense to an actual contractor. In side-by-side comparisons, GPT-5 had significantly better spatial awareness and image generation capabilities.

This is a surprisingly big deal. If you care about layouts, onboarding flows, or product design that involves more than just words, GPT-5 is going to be really useful.

When Should You Use GPT-5 vs. GPT-4.1 or GPT-4o?

- Use GPT-5 when you need technical detail, functional requirements, engineering handoff docs, and real code generation. Also, when you want to throw a complex design or image generation challenge at it.

- Use o3, GPT-4.1, or GPT-4o for business cases, team alignment, executive docs, and anywhere that “too many words” becomes a problem. These are still the kings of clarity and narrative.

If you’re the kind of person who picks models like you pick teammates, think of GPT-5 as your principal engineer, and GPT-4.1 as your product lead. Use both.

TL;DR and Final Thoughts

- GPT-5 Review: Excellent for engineers and coders, slightly overwhelming for business docs unless you trim the fat.

- GPT-5 for Coding: Fast, thorough, actually fun to work with—sometimes too verbose, but quality is high.

- GPT-5 for Product Managers: Use for requirements and technical docs; still start with 4.1 for “why” and “who.”

- GPT-5 Early Review: Outperforms in real workflows, especially on technical and spatial tasks.

Giveaway Time

If you made it all the way to the bottom, thank you. As a little reward (and because I want to hear what you ship with GPT-5), I’m running a giveaway for the How I AI community. Check out howiaipod.com/giveaway to enter.

Please let us know in the comments if you enjoyed this deep dive and what else you want to see.